W&B Kubernetes Operator

Use the W&B Kubernetes Operator to simplify deploying, administering, troubleshooting, and scaling your W&B Server deployments on Kubernetes. You can think of the operator as a smart assistant for your W&B instance.

The W&B Server architecture and design continuously evolve to expand AI developer tooling capabilities and to provide appropriate primitives for high performance, better scalability, and easier administration. That evolution applies to the compute services, the relevant storage, and the connectivity between them. To help facilitate continuous updates and improvements across deployment types, W&B uses a Kubernetes operator.

W&B uses the operator to deploy and manage Dedicated Cloud instances on AWS, GCP and Azure public clouds.

For more information about Kubernetes operators, see Operator pattern in the Kubernetes documentation.

Reasons for the architecture shift

Historically, the W&B application was deployed as a single deployment and pod within a Kubernetes cluster, or as a single Docker container. W&B has recommended, and continues to recommend, externalizing the database and object store. Externalizing the database and object store decouples the application's state from the application itself.

As the application grew, the need to evolve from a monolithic container to a distributed system (microservices) became apparent. This change facilitates backend logic handling and introduces built-in Kubernetes infrastructure capabilities. A distributed system also supports deploying the new services that additional W&B features rely on.

Before 2024, any Kubernetes-related change required manually updating the terraform-kubernetes-wandb Terraform module. Each update meant ensuring compatibility across cloud providers, configuring the necessary Terraform variables, and running terraform apply for each backend or Kubernetes-level change.

This process was not scalable since W&B Support had to assist each customer with upgrading their Terraform module.

The solution was to implement an operator that connects to a central deploy.wandb.ai server to request the latest specification changes for a given release channel and apply them. Updates are received as long as the license is valid. Helm is used as both the deployment mechanism for the W&B operator and the means for the operator to handle all configuration templating of the W&B Kubernetes stack, Helm-ception.

How it works

You can install the operator with helm or from the source. See charts/operator for detailed instructions.

The installation process creates a deployment called controller-manager and uses a custom resource definition named weightsandbiases.apps.wandb.com (shortName: wandb), that takes a single spec and applies it to the cluster:

apiVersion: apiextensions.k8s.io/v1
kind: CustomResourceDefinition
metadata:
  name: weightsandbiases.apps.wandb.com

The controller-manager installs charts/operator-wandb based on the spec of the custom resource, release channel, and a user defined config. The configuration specification hierarchy enables maximum configuration flexibility at the user end and enables W&B to release new images, configurations, features, and Helm updates automatically.

For a detailed description of the specification hierarchy, see Configuration Specification Hierarchy and for configuration options, see Configuration Reference.

Configuration Specification Hierarchy

Configuration specifications follow a hierarchical model where higher-level specifications override lower-level ones. Here’s how it works:

- Release Channel Values: This base level configuration sets default values and configurations based on the release channel set by W&B for the deployment.

- User Input Values: Users can override the default settings provided by the Release Channel Spec through the System Console.

- Custom Resource Values: The highest level of specification, which comes from the user. Any values specified here override both the User Input and Release Channel specifications. For a detailed description of the configuration options, see Configuration Reference.

This hierarchical model ensures that configurations are flexible and customizable to meet varying needs while maintaining a manageable and systematic approach to upgrades and changes.
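The override order described above can be illustrated with a small shell sketch. The function name resolve_setting and the sample values are hypothetical and exist only for illustration; the operator itself merges whole Helm value trees rather than single strings.

```shell
# Hypothetical sketch of the precedence order for a single setting:
# a custom resource value wins, then a user input value (System Console),
# then the release channel default.
# usage: resolve_setting <release_channel_value> <user_input_value> <custom_resource_value>
resolve_setting() {
  if [ -n "$3" ]; then
    printf '%s\n' "$3"   # custom resource value (highest level)
  elif [ -n "$2" ]; then
    printf '%s\n' "$2"   # user input value
  else
    printf '%s\n' "$1"   # release channel default (base level)
  fi
}

# Only the release channel and the custom resource set a value here,
# so the custom resource value is printed.
resolve_setting "channel-default" "" "from-custom-resource"
```

An empty string stands for "not set at this level" in this sketch.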

Requirements to use the W&B Kubernetes Operator

Satisfy the following requirements to deploy W&B with the W&B Kubernetes operator:

- Egress to the following endpoints during installation and during runtime:

- deploy.wandb.ai

- docker.io

- quay.io

- gcr.io

- A Kubernetes cluster running version 1.28 or later with a deployed, configured, and fully functioning Ingress controller (for example, Contour or Nginx).

- An externally hosted and running MySQL 8.0 database.

- Object storage (Amazon S3, Azure Blob Storage, Google Cloud Storage, or any S3-compatible storage service) with CORS support.

- A valid W&B Server license.

See this guide for a detailed explanation on how to set up and configure a self-managed installation.

Depending on the installation method, you might need to meet the following requirements:

- Kubectl installed and configured with the correct Kubernetes cluster context.

- Helm is installed.
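The tooling and version requirements above can be checked before installation with a short preflight script. This is a minimal sketch: the helper names require_cmd and k8s_minor_at_least are hypothetical, and the version string is assumed to look like v1.29.3, for example as shown in the VERSION column of kubectl get nodes.

```shell
# Succeeds if the given command is available on PATH.
require_cmd() {
  command -v "$1" >/dev/null 2>&1
}

# Succeeds if a Kubernetes version string such as "v1.29.3" has major
# version 1 and a minor version of at least the given minimum.
# usage: k8s_minor_at_least <version> <min_minor>
k8s_minor_at_least() {
  version="${1#v}"            # strip a leading "v"
  major="${version%%.*}"      # text before the first dot
  rest="${version#*.}"        # text after the first dot
  minor="${rest%%.*}"         # text before the next dot
  minor="${minor%%[!0-9]*}"   # drop suffixes such as "+" in "28+"
  [ "$major" = "1" ] && [ -n "$minor" ] && [ "$minor" -ge "$2" ]
}

# Report whether the command-line tools named in the requirements exist.
for tool in kubectl helm; do
  if require_cmd "$tool"; then
    echo "$tool: found"
  else
    echo "$tool: missing"
  fi
done

# Example version check against the documented minimum of 1.28.
k8s_minor_at_least "v1.29.3" 28 && echo "Kubernetes version: ok"
```

The script only reports findings. It does not contact the cluster or change anything.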

Air-gapped installations

Air-gapped installations are currently not supported by the W&B Kubernetes operator.

W&B is actively working on adding air-gapped installation support. For status updates, reach out to Customer Support or your W&B team.

Deploy W&B Server application

This section describes different ways to deploy the W&B Kubernetes operator.

The W&B Operator will become the default installation method for W&B Server. Other methods will be deprecated in the future.

Choose one of the following:

- If you have provisioned all required external services and want to deploy W&B onto Kubernetes with Helm CLI, continue here.

- If you prefer managing infrastructure and the W&B Server with Terraform, continue here.

- If you want to utilize the W&B Cloud Terraform Modules, continue here.

Deploy W&B with Helm CLI

W&B provides a Helm Chart to deploy the W&B Kubernetes operator to a Kubernetes cluster. This approach allows you to deploy W&B Server with Helm CLI or a continuous delivery tool like ArgoCD. Make sure that the requirements listed above are in place.

Follow these steps to install the W&B Kubernetes Operator with Helm CLI:

- Add the W&B Helm repository. The W&B Helm chart is available in the W&B Helm repository. Add the repo with the following commands:

helm repo add wandb https://charts.wandb.ai

helm repo update

- Install the Operator on a Kubernetes cluster. Copy and paste the following:

helm upgrade --install operator wandb/operator -n wandb-cr --create-namespace

- Configure the W&B operator custom resource to trigger the W&B Server installation. Create an operator.yaml file to customize the W&B Operator deployment, specifying your custom configuration. See Configuration Reference for details.

Once you have created the specification YAML and filled in your values, run the following command. The operator applies the configuration and installs the W&B Server application based on it.

kubectl apply -f operator.yaml

Wait until the deployment is completed and verify the installation. This will take a few minutes.

- Verify the installation. Access the new installation with the browser and create the first admin user account. When this is done, follow the steps in Verify the installation.

Deploy W&B with Helm Terraform Module

This method allows for customized deployments tailored to specific requirements, leveraging Terraform's infrastructure-as-code approach for consistency and repeatability. The official W&B Helm-based Terraform Module is located here.

The following code can be used as a starting point and includes all necessary configuration options for a production grade deployment.

module "wandb" {
  source = "wandb/wandb/helm"

  spec = {
    values = {
      global = {
        host    = "https://<HOST_URI>"
        license = "eyJhbGnUzaH...j9ZieKQ2x5GGfw"

        bucket = {
          <details depend on the provider>
        }

        mysql = {
          <redacted>
        }
      }

      ingress = {
        annotations = {
          "a" = "b"
          "x" = "y"
        }
      }

      # important, DO NOT REMOVE
      mysql = { install = false }
    }
  }
}

Note that the configuration options are the same as described in Configuration Reference, but that the syntax has to follow the HashiCorp Configuration Language (HCL). The W&B custom resource definition will be created by the Terraform module.

W&B itself uses the Helm Terraform module to deploy Dedicated Cloud installations for customers.

Deploy W&B with W&B Cloud Terraform modules

W&B provides a set of Terraform Modules for AWS, GCP and Azure. Those modules deploy entire infrastructures, including Kubernetes clusters, load balancers, and MySQL databases, as well as the W&B Server application. The W&B Kubernetes Operator is already included in the official W&B cloud-specific Terraform Modules as of the following versions:

| Terraform Registry | Source Code | Version |
|---|---|---|
| AWS | https://github.com/wandb/terraform-aws-wandb | v4.0.0+ |
| Azure | https://github.com/wandb/terraform-azurerm-wandb | v2.0.0+ |
| GCP | https://github.com/wandb/terraform-google-wandb | v2.0.0+ |

This integration ensures that W&B Kubernetes Operator is ready to use for your instance with minimal setup, providing a streamlined path to deploying and managing W&B Server in your cloud environment.

For a detailed description of how to use these modules, refer to the self-managed installations section in the docs.

Verify the installation

To verify the installation, W&B recommends using the W&B CLI. The verify command executes several tests that verify all components and configurations.

This step assumes that the first admin user account is created with the browser.

Follow these steps to verify the installation:

- Install the W&B CLI:

pip install wandb

- Log in to W&B:

wandb login --host=https://YOUR_DNS_DOMAIN

For example:

wandb login --host=https://wandb.company-name.com

- Verify the installation:

wandb verify

A successful installation and fully working W&B deployment shows the following output:

Default host selected: https://wandb.company-name.com

Find detailed logs for this test at: /var/folders/pn/b3g3gnc11_sbsykqkm3tx5rh0000gp/T/tmpdtdjbxua/wandb

Checking if logged in...................................................✅

Checking signed URL upload..............................................✅

Checking ability to send large payloads through proxy...................✅

Checking requests to base url...........................................✅

Checking requests made over signed URLs.................................✅

Checking CORs configuration of the bucket...............................✅

Checking wandb package version is up to date............................✅

Checking logged metrics, saving and downloading a file..................✅

Checking artifact save and download workflows...........................✅
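For automation, captured verify output can be scanned for checks that did not pass. This is a minimal sketch: the function name count_unpassed_checks is hypothetical, and because only passing output is shown above, the sketch assumes that any line starting with "Checking" that lacks the ✅ mark did not pass.

```shell
# Counts "Checking ..." lines that do not carry the check mark.
# Reads captured `wandb verify` output from standard input.
# Assumption: a check line without the mark means the check did not pass.
count_unpassed_checks() {
  # grep -c exits non-zero when the count is 0, so "|| true" keeps the
  # function usable in scripts that run with "set -e".
  grep '^Checking' | grep -v -c '✅' || true
}

# Example with two passing lines copied from the output above.
printf '%s\n' \
  'Checking if logged in...................................................✅' \
  'Checking signed URL upload..............................................✅' \
  | count_unpassed_checks
```

A result of 0 means every check line carried the mark.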

Access the W&B Management Console

The W&B Kubernetes operator comes with a management console. It is located at ${HOST_URI}/console, for example https://wandb.company-name.com/console.

There are two ways to log in to the management console:

- Option 1 (Recommended)

- Option 2

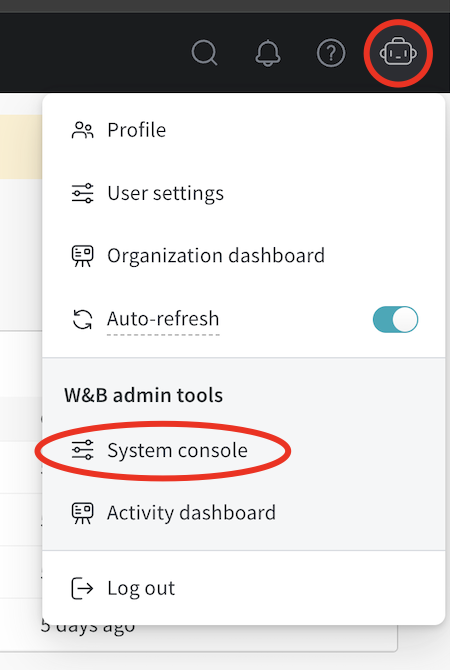

- Open the W&B application in the browser and login. Log in to the W&B application with

${HOST_URI}/, for example https://wandb.company-name.com/ - Access the console. Click on the icon in the top right corner and then click on System console. Note that only users with admin privileges will see the System console entry.

W&B recommends you access the console using the following steps only if Option 1 does not work.

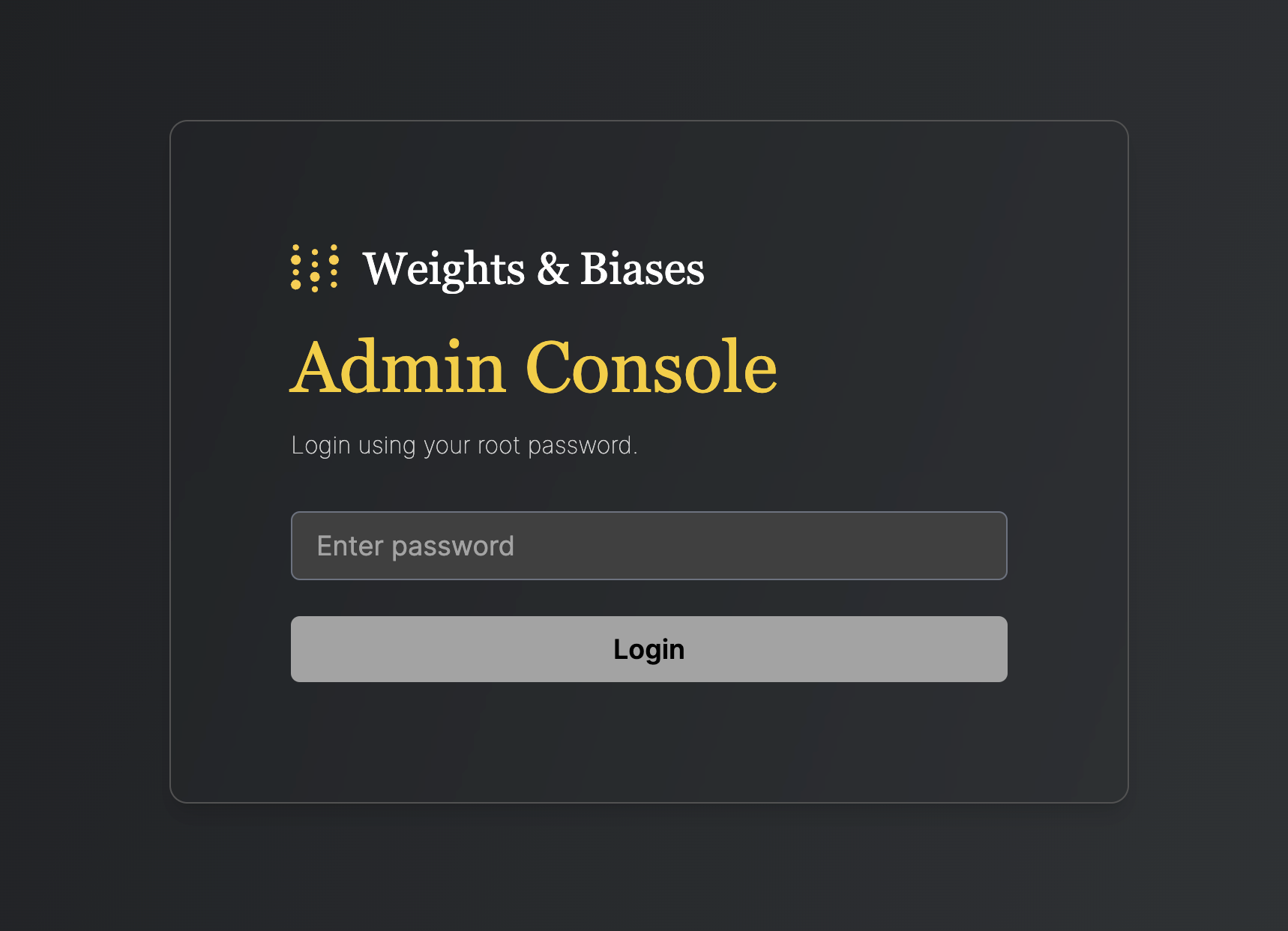

- Open console application in browser. Open the above described URL in the browser and you will be presented with this login screen:

- Retrieve password. The password is stored as a Kubernetes secret and is generated as part of the installation. To retrieve it, execute the following command:

kubectl get secret wandb-password -o jsonpath='{.data.password}' | base64 -d

Copy the password to the clipboard. 3: Login to the console. Paste the copied password to the textfield “Enter password” and click login.
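Kubernetes returns Secret values in base64-encoded form, which is why the retrieval command pipes its output through base64 -d. The following sketch shows that round trip locally. The sample value is hypothetical and stands in for the generated password.

```shell
# Hypothetical value standing in for the generated console password.
sample_password='example-password'

# Secret values under .data are returned in this encoded form.
encoded=$(printf '%s' "$sample_password" | base64)

# Decoding restores the original value, as in the kubectl pipeline.
decoded=$(printf '%s' "$encoded" | base64 -d)

echo "$decoded"
```

No cluster access is needed to run this sketch. It only demonstrates the encoding step.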

Update the W&B Kubernetes operator

This section describes how to update the W&B Kubernetes operator.

- Updating the W&B Kubernetes operator does not update the W&B server application.

- If you use a Helm chart that does not use the W&B Kubernetes operator, see the instructions here before you follow the instructions below to update the W&B operator.

Copy and paste the code snippets below into your terminal.

- First, update the repo with helm repo update:

helm repo update

- Next, update the Helm chart with helm upgrade:

helm upgrade operator wandb/operator -n wandb-cr --reuse-values

Update the W&B Server application

You no longer need to update the W&B Server application if you use the W&B Kubernetes operator.

The operator automatically updates your W&B Server application when a new version of the software of W&B is released.

Migrate self-managed instances to W&B Operator

The following sections describe how to migrate from a self-managed W&B Server installation to one that the W&B Operator manages for you. The migration process depends on how you installed W&B Server:

The W&B Operator will become the default installation method for W&B Server. In the future, W&B will deprecate deployment mechanisms that do not use the operator. Reach out to Customer Support or your W&B team if you have any questions.

- If you used the official W&B Cloud Terraform Modules, follow the steps in the section below for your cloud provider (AWS, GCP, or Azure).

- If you used the W&B Non-Operator Helm chart, continue here.

- If you used the W&B Non-Operator Helm chart with Terraform, continue here.

- If you created the Kubernetes resources with manifest(s), continue here.

Migrate to Operator-based AWS Terraform Modules

For a detailed description of the migration process, continue here.

Migrate to Operator-based GCP Terraform Modules

Reach out to Customer Support or your W&B team if you have any questions or need assistance.

Migrate to Operator-based Azure Terraform Modules

Reach out to Customer Support or your W&B team if you have any questions or need assistance.

Migrate to Operator-based Helm chart

Follow these steps to migrate to the Operator-based Helm chart:

- Get the current W&B configuration. If W&B was deployed with a non-operator-based version of the Helm chart, export the values like this:

helm get values wandb

If W&B was deployed with Kubernetes manifests, export the values like this:

kubectl get deployment wandb -o yaml

Either way, you now have all the configuration values needed for the next step.

- Create a file called operator.yaml. Follow the format described in the Configuration Reference. Use the values from step 1.

- Scale the current deployment to 0 pods. This step stops the current deployment.

kubectl scale --replicas=0 deployment wandb

- Update the Helm chart repo:

helm repo update

- Install the new Helm chart:

helm upgrade --install operator wandb/operator -n wandb-cr --create-namespace

- Configure the new Helm chart and trigger the W&B application deployment. Apply the new configuration.

kubectl apply -f operator.yaml

The deployment will take a few minutes to complete.

- Verify the installation. Make sure that everything works by following the steps in Verify the installation.

- Remove the old installation. Uninstall the old Helm chart or delete the resources that were created with manifests.

Migrate to Operator-based Terraform Helm chart

Follow these steps to migrate to the Operator-based Helm chart:

- Prepare Terraform config. Replace the Terraform code from the old deployment in your Terraform config with the one that is described here. Set the same variables as before. Do not change .tfvars file if you have one.

- Execute the Terraform run. Execute terraform init, plan, and apply.

- Verify the installation. Make sure that everything works by following the steps in Verify the installation.

- Remove the old installation. Uninstall the old Helm chart or delete the resources that were created with manifests.

Configuration Reference

This section describes the configuration options for the W&B Server application. The application receives its configuration as a custom resource of kind WeightsAndBiases. Many configuration options are exposed through the configuration below. Others need to be set as environment variables.

The documentation has two lists of environment variables: Basic and Advanced. Only use environment variables if the configuration option that you need has not yet been exposed through the Helm chart.

The W&B Kubernetes operator configuration file for a production deployment requires the following contents:

apiVersion: apps.wandb.com/v1
kind: WeightsAndBiases
metadata:
  labels:
    app.kubernetes.io/name: weightsandbiases
    app.kubernetes.io/instance: wandb
  name: wandb
  namespace: default
spec:
  values:
    global:
      host: https://<HOST_URI>
      license: eyJhbGnUzaH...j9ZieKQ2x5GGfw
      bucket:
        <details depend on the provider>
      mysql:
        <redacted>
      extraEnv:
        AWS_REGION: "var-must-be-set-despite-not-needed"
    ingress:
      annotations:
        <redacted>
    mysql:
      # important, DO NOT REMOVE
      install: false

This YAML file defines the desired state of your W&B deployment, including the version, environment variables, external resources like databases, and other necessary settings. Use the above YAML as a starting point and add the missing information.

The full list of spec customization can be found here in the Helm repository. The recommended approach is to only change what is necessary and otherwise use the default values.

Complete example

This is an example configuration that uses GCP Kubernetes with GCP Ingress and GCS (GCP Object storage):

apiVersion: apps.wandb.com/v1
kind: WeightsAndBiases
metadata:
  labels:
    app.kubernetes.io/name: weightsandbiases
    app.kubernetes.io/instance: wandb
  name: wandb
  namespace: default
spec:
  values:
    global:
      host: https://abc-wandb.sandbox-gcp.wandb.ml
      bucket:
        name: abc-wandb-moving-pipefish
        provider: gcs
      mysql:
        database: wandb_local
        host: 10.218.0.2
        name: wandb_local
        password: 8wtX6cJHizAZvYScjDzZcUarK4zZGjpV
        port: 3306
        user: wandb
      extraEnv:
        AWS_REGION: "var-must-be-set-despite-not-needed"
      license: eyJhbGnUzaHgyQjQyQWhEU3...ZieKQ2x5GGfw
    ingress:
      annotations:
        ingress.gcp.kubernetes.io/pre-shared-cert: abc-wandb-cert-creative-puma
        kubernetes.io/ingress.class: gce
        kubernetes.io/ingress.global-static-ip-name: abc-wandb-operator-address
    mysql:
      install: false

Host

# Provide the FQDN with protocol
global:
  # example host name, replace with your own
  host: https://abc-wandb.sandbox-gcp.wandb.ml

Object storage (bucket)

AWS

global:
  bucket:
    provider: "s3"
    name: ""
    kmsKey: ""

GCP

global:
  bucket:
    provider: "gcs"
    name: ""

Azure

global:
  bucket:
    provider: "az"
    name: ""
    secretKey: ""

Other providers (Minio, Ceph, etc.)

For other S3-compatible providers, set the bucket configuration as an environment variable as follows:

global:
  extraEnv:
    "BUCKET": "s3://wandb:changeme@mydb.com/wandb?tls=true"

The variable contains a connection string in this form:

s3://$ACCESS_KEY:$SECRET_KEY@$HOST/$BUCKET_NAME

You can optionally tell W&B to only connect over TLS if you configure a trusted SSL certificate for your object store. To do so, add the tls query parameter to the url:

s3://$ACCESS_KEY:$SECRET_KEY@$HOST/$BUCKET_NAME?tls=true

This will only work if the SSL certificate is trusted. W&B does not support self-signed certificates.
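The connection string format above can be assembled with a small helper. This is a minimal sketch: the function name build_bucket_url is hypothetical, and it assumes the access key and secret key contain no characters that would need URL encoding.

```shell
# Builds the BUCKET connection string for an S3-compatible object store.
# usage: build_bucket_url <access_key> <secret_key> <host> <bucket_name> [tls]
# Pass "true" as the fifth argument to append the tls query parameter.
# Assumption: the credentials contain no characters that need URL encoding.
build_bucket_url() {
  url="s3://$1:$2@$3/$4"
  if [ "${5:-false}" = "true" ]; then
    url="${url}?tls=true"
  fi
  printf '%s\n' "$url"
}

# Reproduces the example value used for the BUCKET variable above.
build_bucket_url "wandb" "changeme" "mydb.com" "wandb" "true"
```

The printed string is the value to place in the BUCKET entry under global.extraEnv.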

MySQL

# disable in-chart MySQL
mysql:
  install: false

global:
  mysql:
    # Example values, replace with your own
    database: wandb_local
    host: 10.218.0.2
    name: wandb_local
    password: 8wtX6cJH...ZcUarK4zZGjpV
    port: 3306
    user: wandb

License

global:
  # Example license, replace with your own
  license: eyJhbGnUzaHgyQjQy...VFnPS_KETXg1hi

Ingress

To identify the ingress class, see this FAQ entry.

Without TLS

global:
# IMPORTANT: Ingress is on the same level in the YAML as ‘global’ (not a child)
ingress:
  class: ""

With TLS

Create a secret that contains the certificate

kubectl create secret tls wandb-ingress-tls --key wandb-ingress-tls.key --cert wandb-ingress-tls.crt

Reference the secret in the ingress configuration

global:
# IMPORTANT: Ingress is on the same level in the YAML as ‘global’ (not a child)
ingress:
  class: ""
  annotations:
    {}
    # kubernetes.io/ingress.class: nginx
    # kubernetes.io/tls-acme: "true"
  tls:
    - secretName: wandb-ingress-tls
      hosts:
        - <HOST_URI>

In case of Nginx you might have to add the following annotation:

ingress:
  annotations:
    nginx.ingress.kubernetes.io/proxy-body-size: 64m

External Redis

redis:
  install: false

global:
  redis:
    host: ""
    port: 6379
    password: ""
    parameters: {}
    caCert: ""

Alternatively, store the Redis password in a Kubernetes secret:

kubectl create secret generic redis-secret --from-literal=redis-password=supersecret

Reference it in the configuration below:

redis:
  install: false

global:
  redis:
    host: redis.example
    port: 9001
    auth:
      enabled: true
      secret: redis-secret
      key: redis-password

LDAP

Without TLS

global:
  ldap:
    enabled: true
    # LDAP server address including "ldap://" or "ldaps://"
    host:
    # LDAP search base to use for finding users
    baseDN:
    # LDAP user to bind with (if not using anonymous bind)
    bindDN:
    # Secret name and key with LDAP password to bind with (if not using anonymous bind)
    bindPW:
    # LDAP attribute for email and group ID attribute names as comma separated string values.
    attributes:
    # LDAP group allow list
    groupAllowList:
    # Enable LDAP TLS
    tls: false

With TLS

The LDAP TLS cert configuration requires a config map pre-created with the certificate content.

To create the config map you can use the following command:

kubectl create configmap ldap-tls-cert --from-file=certificate.crt

Then reference the config map in the YAML, as in the following example:

global:
  ldap:
    enabled: true
    # LDAP server address including "ldap://" or "ldaps://"
    host:
    # LDAP search base to use for finding users
    baseDN:
    # LDAP user to bind with (if not using anonymous bind)
    bindDN:
    # Secret name and key with LDAP password to bind with (if not using anonymous bind)
    bindPW:
    # LDAP attribute for email and group ID attribute names as comma separated string values.
    attributes:
    # LDAP group allow list
    groupAllowList:
    # Enable LDAP TLS
    tls: true
    # ConfigMap name and key with CA certificate for LDAP server
    tlsCert:
      configMap:
        name: "ldap-tls-cert"
        key: "certificate.crt"

OIDC SSO

global:
  auth:
    sessionLengthHours: 720
    oidc:
      clientId: ""
      secret: ""
      authMethod: ""
      issuer: ""

SMTP

global:
  email:
    smtp:
      host: ""
      port: 587
      user: ""
      password: ""

Environment Variables

global:
  extraEnv:
    GLOBAL_ENV: "example"

FAQ

How to get the W&B Operator Console password

See Access the W&B Management Console.

How to access the W&B Operator Console if Ingress doesn’t work

Execute the following command on a host that can reach the Kubernetes cluster:

kubectl port-forward svc/wandb-console 8082

Access the console in the browser with https://localhost:8082/console.

See Access the W&B Management Console for how to get the password (Option 2).

How to view W&B Server logs

The application pod is named wandb-app-xxx.

kubectl get pods

kubectl logs wandb-XXXXX-XXXXX

How to identify the Kubernetes ingress class

To list the ingress classes installed in your cluster, run:

kubectl get ingressclass